
In part 2 I wanted to fix the problem with the loadbalancer from part 1. It did not actually function as a loadbalancer; it just proxied each request to the correct webserver.

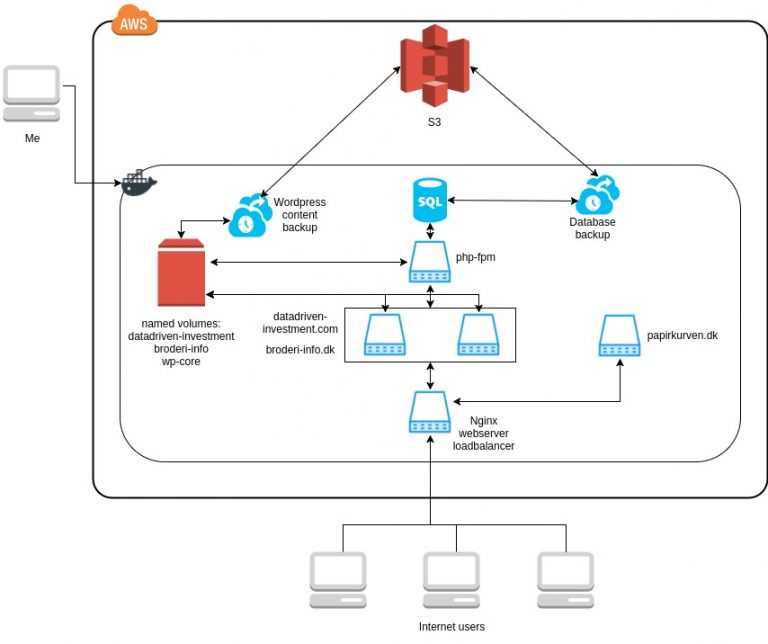

The new setup is shown in the diagram above.

The changes from part 1 are:

- The web servers in the middle of the diagram now both serve requests for datadriven-investment.com and broderi-info.dk, with the loadbalancer distributing requests between them.

- The php-fpm process now runs in a separate server; before, it was rolled into the web servers.

- The wordpress core files (wp-core) are now loaded by a one-off docker container that boots, extracts the wordpress files into the named volume, and then exits.

- To centralize the wordpress files I needed to make a few changes to the wordpress config file. This is explained below.

Learning points

Initially I thought of docker containers as ordinary servers. This led to the setup from part 1, with nginx, php-fpm and wordpress in a single container. I have now found out that this is the wrong way to do it.

Each container should have a single responsibility and run a single process. When a container runs a single process and that process exits, the container stops, so docker knows that something is wrong and can take action.

This is built into docker, and you can configure which action to take. Running a single process also lets it log to stdout instead of a logfile, and docker can then be configured to decide where the log output goes.

If a container runs two processes, for example nginx and php-fpm like the webservers from part 1, one of them needs to run in the background. If the background process terminates, docker will not know, since the container keeps running with the other process. This makes error handling much harder. So as far as possible I will set up containers with a single process, which makes the infrastructure easier to understand and easier for docker to work with.
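As a sketch of what configuring this could look like in a version 2 docker-compose file: the `restart` key sets what docker does when the container's process exits, and the `logging` key sets where stdout and stderr end up. The service shown is a php-fpm container like the one used later in this post; the restart policy and the log size values are illustrative assumptions, not settings from the actual setup.

```yaml
# Sketch only: restart policy and log handling for a single-process container.
# The option values below are illustrative assumptions.
version: "2"
services:
  php:
    build: php-fpm
    # Restart the container when its process exits with a non-zero exit code
    restart: on-failure
    logging:
      # json-file is docker's default driver; it captures stdout and stderr
      driver: json-file
      options:
        max-size: "10m"   # rotate the log file once it reaches 10 MB
        max-file: "3"     # keep at most three log files
```

With two processes in one container, neither setting would cover the process running in the background.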

I was thrown off by the concept of docker data containers, an older way of letting different containers share data. This concept has been superseded by named data volumes, but it took me a while to understand that named volumes would let me do what I wanted.

The setup with volumes is explained further as part of the wordpress setup.

Infrastructure setup in docker-compose looks like the file below:

    version: "2"
    services:
      ############## loadbalancer ################
      loadbalancer:
        build: loadbalancer
        ports:
          - '80:8080' # map container port to host for external access
        links:
          - papirkurven
          - http1
          - http2
        restart: always

      fileserver:
        build: fileserver
        volumes:
          - wp-core:/var/wordpress/

      ############ DB server ###################
      db:
        image: mariadb
        volumes:
          - db-data:/var/lib/mysql
        restart: always
        environment:
          - MYSQL_ROOT_PASSWORD=xxx

      backup-db:
        image: candyline/mysql-backup-cron
        environment:
          - MYSQL_ROOT_PASSWORD=xxx
          - MYSQL_HOST=db
          - BACKUP_DIR=/var/backups/
          - CRON_D_BACKUP="0 6 * * * root /backup.sh | logger"
          - DAILY_CLEANUP=1
          - MAX_DAILY_BACKUP_FILES=30
          - STORAGE_TYPE=s3
          - REGION=eu-west-1c
          - ACCESS_KEY=xxx
          - SECRET_KEY=xxx
          - BUCKET=s3://sqlbackup.patch.dk/

      backup-files:
        image: istepanov/backup-to-s3
        environment:
          - ACCESS_KEY=xxx
          - SECRET_KEY=xxx
          - S3_PATH=s3://sqlbackup.patch.dk/wp-content/
          - DATA_PATH=/data/
        volumes:
          - datadriven-investment-data:/data/datadriven-investment:ro
          - broderi-info-data:/data/broderi-info:ro

      #################### WEBSITES ############
      papirkurven:
        build: papirkurven
        restart: always

      http1:
        build: httpd
        restart: always
        links:
          - php
        volumes:
          - wp-core:/var/www/datadriven-investment.com/:ro
          - wp-core:/var/www/broderi-info.dk/:ro
          - datadriven-investment-data:/var/www/datadriven-investment.com/wp-content:ro
          - broderi-info-data:/var/www/broderi-info.dk/wp-content:ro

      http2:
        build: httpd
        restart: always
        links:
          - php
        volumes:
          - wp-core:/var/www/datadriven-investment.com/:ro
          - wp-core:/var/www/broderi-info.dk/:ro
          - datadriven-investment-data:/var/www/datadriven-investment.com/wp-content:ro
          - broderi-info-data:/var/www/broderi-info.dk/wp-content:ro

      php:
        build: php-fpm
        links:
          - db
        restart: always
        volumes:
          - wp-core:/var/www/datadriven-investment.com/:ro
          - wp-core:/var/www/broderi-info.dk/:ro
          - datadriven-investment-data:/var/www/datadriven-investment.com/wp-content # write allowed
          - broderi-info-data:/var/www/broderi-info.dk/wp-content # write allowed

    ############## Data persisted on host #######
    volumes:
      db-data: # database files
      wp-core: # wordpress core
      datadriven-investment-data: # wordpress modules / themes
      broderi-info-data: # wordpress modules / themes

Wordpress setup

To load a wordpress site we need to execute scripts to generate the html to display the page, and to request static files like images and css files. This requires both the webservers and the php-fpm server to have access to the wordpress files.

In part 1 the http server was booted from an image where the wordpress files were part of the server itself, and the custom files in wp-content were mounted onto the server. This has changed a bit. Now we have the named volume “wp-core”. When the service “fileserver” boots, it mounts the wp-core volume and extracts a tar.gz file with the wordpress files into this volume, overwriting any existing files.
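A one-off service like this could be sketched as below. The `command` line and the archive path `/wordpress.tar.gz` are assumptions for illustration; the actual fileserver image may well do the extraction from its own Dockerfile instead.

```yaml
# Sketch only: a service that fills the wp-core volume and then exits.
version: "2"
services:
  fileserver:
    build: fileserver
    volumes:
      - wp-core:/var/wordpress/
    # Hypothetical command: unpack the archive into the mounted volume, then exit.
    # Assumes the archive holds the wordpress files at its top level.
    command: tar -xzf /wordpress.tar.gz -C /var/wordpress
    # No "restart: always" here, so the container stays stopped after it exits.

volumes:
  wp-core:
```

Since the container exits as soon as the extraction is done, it fits the one-process-per-container idea: its single process is the extraction itself.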

This volume is then mounted read-only on http1, http2 and php. The core files are mounted for both websites in separate folders.

This allows php to serve the php script requests, and http1 and http2 to serve the requests for static files.

Afterwards the wp-content volume for each site (“datadriven-investment-data” and “broderi-info-data”) is mounted inside the corresponding wp-core mount.

Now the only problem is that wordpress expects to find a wp-config.php with the database connection info in the root directory of the website, which is where the wp-core files are located.

Since each site needs a different wp-config.php, this messes with the setup. But it is easy to fix: the original wp-config.php is moved to the wp-content folder, and a simple wp-config.php is added to the wp-core folder.

    <?php
    require_once __DIR__."/wp-content/wp-config.php";

Now any request will load the config file from the wp-content folder instead. As a side effect, wp-config.php now lives in the wp-content folder, so all custom files for a site are in a single folder, which makes backup easy.

Whenever a new wordpress version is released, I just need to update the wordpress.tar.gz in my fileserver docker image and deploy the image.

Nginx load balancing setup

With a setup where the webservers can serve requests for any site, the load balancing setup is quite easy.

    upstream datadriven-investment-loadbalance {
        server http1;
        server http2;
    }

    server {
        listen 8080;
        server_name datadriven-investment.com;

        location / {
            proxy_set_header Host $host;
            proxy_set_header X-Forwarded-For $remote_addr;
            proxy_pass http://datadriven-investment-loadbalance;
        }
    }

We define an upstream group of servers to load balance between and use this group in the proxy_pass option. Then nginx handles the rest. The default mode is round robin, so it switches between the servers for each request.

Pros and Cons with this setup

I’m not particularly happy with the deployment process. It is very primitive: I just issue a docker-compose up, which shuts down all containers and boots them again with the new images. This causes downtime while it is deploying.

I will need to research more on how to construct a better process.

The php-fpm server is not load balanced, which is a weak point in the setup, since this is where most of the load will be.

Since php needs session data, at least for wordpress admin, we need shared session state between all the php-fpm servers. Load balancing this part will therefore require a different session setup than the webservers, which do not need any shared state.

If a webserver dies, nothing has been added to the loadbalancer to handle it, so it will start getting timeouts on half of the requests.

I’m getting happy with the wordpress setup. All the custom parts of wordpress are now centralized in a single directory, making them easy to back up and manage.

The two webservers are identical but are defined as two different services in the docker-compose file. I guess this is not optimal: load balancing between 2 servers is ok to have in the docker-compose file, but with many more it will get bloated.

Also, the names of the webservers are hardcoded into the nginx configuration files, so some kind of service discovery is also needed.
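One possible direction, given here as an untested sketch and not as part of the setup above, is to define the webserver once and let docker-compose start several copies of it. The service name `http` is hypothetical; the build directory, link and volumes are copied from http1.

```yaml
# Sketch only: one webserver definition instead of http1 and http2.
# The service name "http" is an assumption, not part of the original file.
# This is a fragment: the php service and the named volumes are defined as before.
version: "2"
services:
  http:
    build: httpd
    restart: always
    links:
      - php
    volumes:
      - wp-core:/var/www/datadriven-investment.com/:ro
      - wp-core:/var/www/broderi-info.dk/:ro
      - datadriven-investment-data:/var/www/datadriven-investment.com/wp-content:ro
      - broderi-info-data:/var/www/broderi-info.dk/wp-content:ro
```

Running `docker-compose scale http=2` would then start two containers from this one definition. On the default network of a version 2 compose file each container should be reachable under the service name, so the upstream group might only need `server http;`. Whether nginx picks up every container this way would have to be tested, since it only resolves the name when it loads its configuration.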

This needs more research.

Next steps

Improving the deployment process is a must for the next step.
